Xbox One and PS4 Hardware Updates Could be Disastrous – Here’s Why

The big rumors out of last week's GDC are that both Sony and Microsoft are working on a mid-console-generation upgrade.

Microsoft's Head of Xbox, Phil Spencer, is proposing "more hardware innovation in the console space," and an end to the era in which "consoles lock the hardware and the software platforms together at the beginning of the generation."

Sony hasn't announced anything formally, but Kotaku has broken the rumor that there is also a "PS4.5" in the works.

Microsoft's announcement seems geared more toward the general future of the console market, while the rumors surrounding Sony point to more concrete goals: they want the PS4 to run games in 4K, and to have boosted processing power in order to run resource-hungry games that support the PlayStation VR.

Now, we don't know much about this beyond rumors and speculation. There's no telling how exactly this will play out; we could be talking about add-ons to existing hardware, or just a whole new box entirely (e.g. New Nintendo 3DS).

But in either of these cases, we're setting a dangerous precedent.

Microsoft sounds most like they're suggesting incremental hardware upgrades, much in the same way that one upgrades a PC with a new GPU or expanded RAM. We've seen how sexy it is when we start adding attachments and add-ons to consoles. It's nothing new.

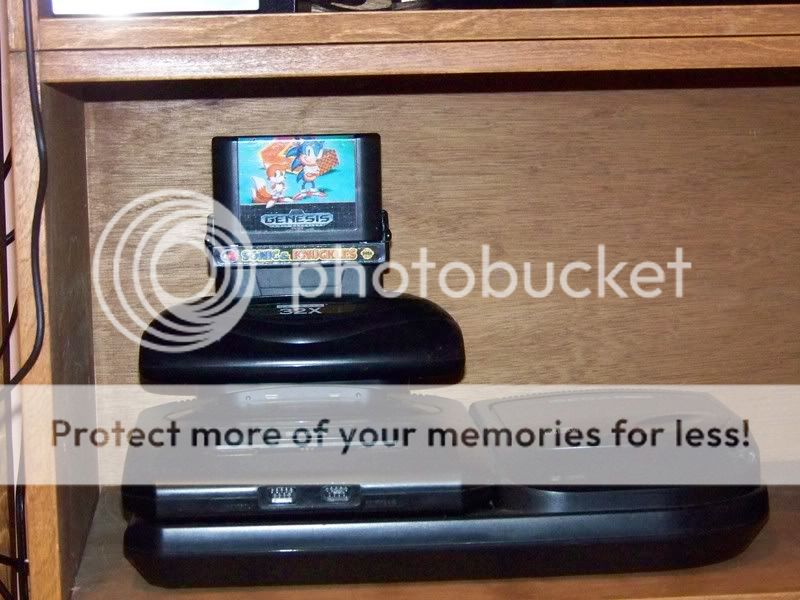

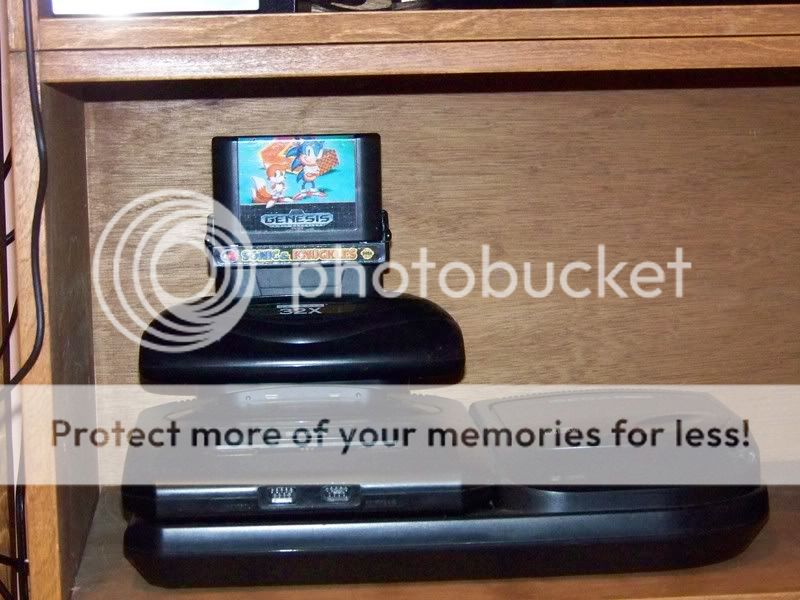

[caption id="" align="alignnone" width="800"]

Ladies and gentlemen, I give you... the upgradable video game console.[/caption]

Is this an unfair comparison? Yeah, of course it is. But it highlights a fatal flaw in the logic driving Sony and Microsoft: this is a slippery slope that leads to the destruction of the console market's biggest advantage over PCs. Ironically, that advantage is the very same "locked hardware" syndrome that Spencer talked about getting away from.

Now, full disclosure, I speak as a long-time console aficionado, who only plays games on PC when he absolutely has to (hello, Undertale). Maybe my age is showing, or maybe it's my bias, but having the perspective of a console gamer makes this whole situation feel very, very bad.

See, I completely understand that top-end PCs can run video games at higher resolutions, locked framerates, and with all-around better performance... usually. I play on consoles because I don't have a top-end PC, and find it easier to buy new hardware every 5-7 years rather than keep up with the PC specs arms race.

Am I getting the highest conceivable performance out of my games? Absolutely not. But in return, I can take a game, stick it in my console, and be assured of 100 percent compatibility and optimization immediately, with no work on my part.

[caption id="" align="alignnone" width="1214"]

I'm actually quite comfortable with this paradigm, Mr. Croshaw.[/caption]

I don't have to tinker with settings, or look up error messages for missing .dll files, or install the new version of DirectX, or play on reduced settings because this game stretches beyond the limits of the new GPU that I just bought 8 months ago. I just plug and play. I'm comfortable with that, enough to make a potential reduction in performance worth it.

But now, maybe the console market doesn't give me that comfort anymore. Maybe we're looking at a future in which I need to upgrade my PS4's processor in order to play Fallout 5 with a steady framerate. Maybe my Xbox One will demand that I buy the GPU add-on before booting up Destiny 2.

From a developer standpoint, this goes one of two ways.

In one scenario, developers continue to work with the base hardware and optimize it like a normal console generation, largely ignoring the optional upgrades after an initial onslaught of shoehorned "support" for the platform. In this case, everybody who bought in on the upgrades ends up with a largely useless and unsupported piece of expensive hardware.

[caption id="" align="alignnone" width="800"]

Presented without comment.[/caption]

In the other scenario, developers fall in love with the capabilities of the new hardware upgrades, and start designing games with higher specs and more hardware demand in mind. In this case, it stands to reason that games designed to run on the upgraded platform would run poorly (or not at all) on the base hardware.

And if that's the case, console games become indistinguishable from PC games from a compatibility standpoint.

Let's be clear: it is unlikely that any upgrades to the PS4 and Xbox One are going to put them on par with top-end PCs from the perspective of raw power. So if the goal here is to make up the difference between console and PC in terms of processing power, this is an even more misguided attempt at shaking up the console market.

It's more likely that this is a way to get consumers on board with spending small amounts of money more frequently to keep their consoles current, rather than larger amounts more sporadically to move up to a new console generation.

This makes perfect sense for Microsoft and Sony, who get to boost their hardware sales by offering upgrades to existing console owners, and also keep the price point of their new consoles steady. Meanwhile, they don't have to incur the massive costs in R&D and promotion associated with launching an actual new console.

On the consumer side, Microsoft gets to move Xbox players closer to its vision of seamless cross-play between platforms. Sony gives its system a boost so the demands of the PlayStation VR are met without sacrificing core performance.

So in the short term, this makes sense to everybody. But how far does this go?

A few years down the road, do we have a version of Xbox One hardware with so many upgraded parts that it no longer plays nice with the Day 1 hardware? Do we have a generation of games that are "exclusive" to a certain hardware upgrade, or are finicky without a certain setup?

If this is the future we're looking at, then I, and other console loyalists, have a decision to make.

With console manufacturers blurring the lines between console and PC, what reason remains to own a console? If both platforms require periodic upgrades, why would you choose the one that is obviously still less powerful?

Sony and Microsoft would do well to consider that question for themselves before they blur the lines so much that they lose what advantages they still have over the PC platform.
