5 Ways Difficulty Settings in Videogames Are Bad Design

To understand why difficulty settings are sometimes bad design we need to talk about how difficulty impacts player engagement. If a game is too hard, you might say “Hey, I paid $59.99 for this content, how about not giving me a hard time about experiencing all of it?”, but with videogames in particular it’s not as simple as it is with movies or books.

The engagement in videogames can certainly come from the narrative, aesthetic or interactive elements. Even pushing a button can be engaging if that button progresses the story or has some substantial effect on the virtual world. However, I believe that the majority of games deliver engagement through the actual game part of the videogame.

[caption id="attachment_85715" align="aligncenter" width="601"]

And sometimes it's just walking very very slowly.[/caption]

When I say game, I’m referring to the traditional definition of the word, like Sudoku, Chess or the Olympics. In traditional games, participants are engaged by overcoming a challenge or an opponent. The engagement comes from a mastery over the rules and abilities which define that game.

With videogames we get the best of both worlds - content interaction and game rules. This is important because each player has to be challenged enough by the game to be entertained, but not so much that they hit a wall.

[caption id="attachment_85716" align="aligncenter" width="400"]

When that boss kills you the seventeenth time.[/caption]

Solving for the entertainment of players of varying skill levels while providing them with a convincingly challenging game is no easy task, and the solution will continue to evolve for decades to come. Having said that, there are a number of established approaches to handling this dilemma, and the very worst has to be difficulty settings. Here’s why:

5. Deciding Game Difficulty Before Playing the Game is Arbitrary

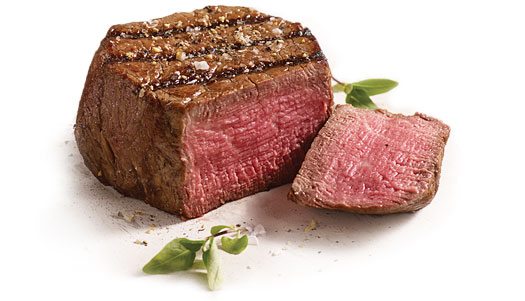

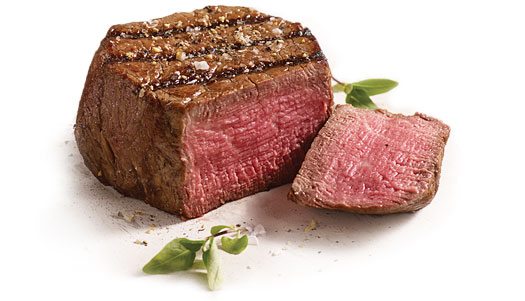

Having to select the flavor of your entire playing experience before getting even a taste is already a huge contradiction of intention. It’s like pouring salt all over your expensive steak before taking a single bite. You might love salt, but you really have no basis on which to judge how salty the chef made the steak in the first place.

[caption id="attachment_85572" align="aligncenter" width="525"]

"Thank you, and would you please fetch my salt bucket?"[/caption]

Some videogames give more information about how these settings change the game than others, but it's all kind of meaningless until you experience it firsthand. These settings can be great for replayability once you're comfortable with the game, but at the outset they only present an uninformed choice that dictates your entire playing experience.

Selecting the wrong difficulty right at the start can sometimes lock you into dozens of hours of playing a game that is boringly easy or frustratingly difficult. This is why many games started putting difficulty settings right in the pause menu, so players can change them at any time, but...

4. Changing the Difficulty Mid-Game is Anti-Immersive

Above all else, games should strive to be immersive. This is achieved by placing the player in a virtual world where their decisions matter and have weight. Where all the options are refined and balanced to encourage compelling interactions with the game world and its characters.

Having one of those options be “change the nature of the entire game at any given moment” throws that refined balance right out the window. Immersion is achieved by trying to get players to believe they are in a world where things matter, so handing them godlike powers while still asking them to believe they’re the underdog is somewhat unreasonable.

[caption id="attachment_85576" align="aligncenter" width="610"]

"Oh shit, is this on easy now?" - Last words of Jeremy the articulate Deathclaw.[/caption]

To pull back the reins on the godlikeness of those powers, some developers have prototyped various ways to change these settings automatically, without the player’s knowledge. It’s a bold approach, but unfortunately...

3. Adaptive Difficulty Still Has a Long Way to Go

On paper, automated difficulty seems like a really great idea. If the player is really good, throw more of a challenge at them. If they’re inexperienced or unable to play normally, ease up the difficulty to allow them to progress with less overall frustration.
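As a rough illustration of the idea, here is a minimal sketch of that kind of feedback loop. Everything in it is an assumption made for the example: the `AdaptiveDifficulty` class, the `level` multiplier, the step size and the limits are hypothetical and not taken from any particular game.

```python
from dataclasses import dataclass


@dataclass
class AdaptiveDifficulty:
    """Hypothetical rubber-band difficulty controller (illustrative only)."""

    level: float = 1.0      # enemy strength multiplier; 1.0 is the assumed baseline
    step: float = 0.1       # how far a single encounter can move the level
    min_level: float = 0.5  # assumed floor so the game never becomes trivial
    max_level: float = 2.0  # assumed ceiling so the game never becomes impossible

    def record_encounter(self, player_won: bool) -> float:
        # A win nudges the challenge up, a loss nudges it down.
        delta = self.step if player_won else -self.step
        # Clamp the result to the allowed range.
        self.level = min(self.max_level, max(self.min_level, self.level + delta))
        return self.level


if __name__ == "__main__":
    tuner = AdaptiveDifficulty()
    for outcome in [True, True, False, False, False]:
        print(round(tuner.record_encounter(outcome), 2))
```

Note that this loop only sees wins and losses. Losing on purpose lowers `level` just as surely as genuinely struggling does.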

The catch is that this effectively removes certain aspects of what makes a game a game. There is no challenge, because it is essentially all self-imposed. Why try harder if you know you’re going to be punished for it instead of rewarded? There are many ways to approach automating difficulty, but it most often has the unfortunate outcome of encouraging sloppy or lazy play. It’s the communism of game design. Each player is rewarded based on their needs and not their merits. Everyone has the same experience, but that experience never rises above the status quo.

[caption id="attachment_85580" align="aligncenter" width="601"]

Stalin hates games that don't change according to the need of the people.[/caption]

I can’t say that automated difficulty is necessarily a bad approach because I’m completely convinced that there’s a way to do it right. With the rise of "brain wear" technologies, literal mind reading becomes a possibility for videogames in the near future. This technology can close the loop on difficulty automation. Every reaction the player has to the game can be evaluated and used to alter the game in real-time. Whether the player is bored, scared, overwhelmed or excited, the game can use that information to adjust the challenge in an appropriate manner.

2. A Definitive Vision of a Game Beats Several Disparate Ones

When we think of artists and creators, we like to think that they have a specific vision of their work. That their brilliance comes through to us intentionally and not as a result of them throwing things against a wall to see what sticks. This isn’t always the case and even a broken clock is sometimes exactly accurate, but in the best case scenarios we get true pioneers shaping their own vision into something extraordinary.

A game divided into several versions by difficulty can’t usually be said to be a singular vision. It may have started that way before the publisher got hold of it, but in the end, a collection of disparate versions of a game is not the same as a singular vision of the whole. To many players this may not matter at all, but there is something inherently elegant about a game that is consistently challenging and accessible to everyone without having to change its rules.

[caption id="attachment_85632" align="aligncenter" width="600"]

Publisher: "We love the game, but the focus group indicates it's unplayable. Let's throw a kind of 'GOD Mode' in to even things out, ok?"[/caption]

A masterful creator will find opportunities within the game to challenge the player or to provide a helping hand where one is needed. Throwing the entire game under the bus to cash in on accessibility is at best heavy-handed and at worst completely game-breaking. In the end, any worthwhile game is going to be more challenging when you've just started it. The best game developers find ways to allow the player to grow with the game, not just settle into their comfort zone from start to finish.

1. Having to Curate Your Own Fun Defeats the Purpose

When we buy videogames, we're not just paying for the content on the disc. Somewhat ironically, we're paying for that content to be withheld from us until we accomplish something. But getting all the content at once isn't why we play games. If we wanted to have all the content readily given to us, we'd rent a movie. When we buy videogames we're paying for the privilege of being a player. For the opportunity to become immersed in the game's progression, obstacles and challenges.

Developers spend an incredible amount of time shaping their game world to feel like a consistent and memorable place. They don't make the player choose where to put the boss, what the creatures look like or how the game systems work. They present a persistent environment for the player to explore and discover. Having to ask the player how capable they are and what kind of enemies they would prefer flies in the face of persistent design.

[caption id="attachment_85668" align="aligncenter" width="600"]

Remember what happened last time we got to design the creatures?[/caption]

Incorporating difficulty settings is ultimately lazy design. This is the developer (or more often publisher) telling you that if you think the game is too easy or too hard, it’s your own fault. The creators couldn't be bothered to make a game that works for their entire audience so they split it into pieces, and if you chose the wrong piece to play, that’s entirely on you.

Bonus: Some Alternatives To Difficulty Settings

Now, I wouldn't write fifteen hundred words bashing an approach to game design without offering some alternative examples. The original Star Fox had three different paths through the game universe that varied in difficulty. There was no menu option to choose which one you wanted to play; in fact, there was no obvious way to select the path at all. In order to get to the harder levels, you have to know Star Fox's secrets and be able to play the game well enough to access them.

Another good example is PS2's Matrix: Path of Neo. This game does have a difficulty settings menu, but the game makes you go through a combat test first. The very first thing in the game is the infamous lobby scene from the first film, perhaps the most memorable scene from the entire trilogy, right at the start. It eases you in by having you fight a few security guards. Before long, provided you survive, cops, SWAT and eventually even agents come up against you, all within the first few minutes of playing the game for the first time. By having the player engage the game in an escalating combat trial, both the player and the game know what to expect from one another. The higher difficulties don't open up for novice players, while skilled players know exactly where they stand with the game and are able to make an educated decision.

[caption id="attachment_85649" align="aligncenter" width="589"]

Remember the ceiling SWAT guys from that scene? Just kidding, I flipped the pic. Wouldn't that be dumb if they actually did something like that, though?[/caption]
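The escalating combat trial described above can be sketched as a simple gate on the difficulty menu. This is only a guess at the shape of such a system, not how Path of Neo actually implements it: the setting names, the `DIFFICULTY_REQUIREMENTS` thresholds and both function names are hypothetical, and only the wave order comes from the description of the lobby scene.

```python
from typing import List, Sequence

# Wave order as described for the lobby scene.
WAVES = ("security guards", "cops", "SWAT", "agents")

# (setting name, waves that must be cleared to unlock it).
# These names and thresholds are assumptions made up for this sketch.
DIFFICULTY_REQUIREMENTS = (("Easy", 0), ("Normal", 2), ("Hard", 4))


def run_trial(survived_waves: Sequence[bool]) -> int:
    """Count the waves cleared in order; the trial ends at the first defeat."""
    cleared = 0
    for survived in survived_waves[: len(WAVES)]:
        if not survived:
            break
        cleared += 1
    return cleared


def unlocked_difficulties(waves_cleared: int) -> List[str]:
    """Return only the settings the player has earned during the trial."""
    return [
        name
        for name, needed in DIFFICULTY_REQUIREMENTS
        if waves_cleared >= needed
    ]


if __name__ == "__main__":
    cleared = run_trial([True, True, False, True])
    print(WAVES[:cleared], unlocked_difficulties(cleared))
```

The point of the gate is that the menu only appears after the player has evidence to choose with, and the game only offers what the trial suggests they can handle.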

For a truly wide array of incredibly popular, accessible, fun and challenging games that don't rely on difficulty settings, you need look no further than Nintendo. For over 30 years Nintendo has released hit after hit without ever falling back on that crutch. Stellar franchises like Mario, Zelda, Metroid, Kirby, Donkey Kong, Pokemon and many more have attracted new audiences to gaming while keeping their longtime fans enticed with difficult and compelling challenges.

It goes to show that accessibility does not require sacrificing cohesiveness. That players can be challenged without having to repeat an entire game over again from the start with harder enemies. That's all I have for today. Do you think I'm completely off-base? Do games have an inherent need for player-defined difficulty settings? Let me know in the comments below.
